AddrView allows you to parse HTML pages and extract most of the URL addresses stored in them. Open URL Address: downloads the HTML page from the HTTP address that you specify.

This tool will extract all URLs from text. It works with all standard links, including those with non-English characters if the link includes a trailing / followed by.

Returns the extension of the file referenced in the url, if applicable.

=UrlProperty(A1,"query","a") => "b"
If no queryParam is specified, the entire query string is returned; otherwise, the value of the specified query parameter is returned. Adding a fourth parameter, queryValue, with the property "query" allows you to set the value of a queryParam in a url.
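The three behaviours of the "query" property (whole query string, single parameter value, setting a value) can be sketched in Python with the standard `urllib.parse` module. This is a hypothetical analogue, not UrlProperty's implementation: the function name `url_query`, returning "" for a missing parameter, and returning the rewritten url when a value is set are all assumptions.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def url_query(url: str, param: Optional[str] = None, value: Optional[str] = None) -> str:
    """Hypothetical Python analogue of UrlProperty(url, "query", param, value)."""
    parts = urlsplit(url)
    if param is None:
        # No queryParam: return the entire query string.
        return parts.query
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    if value is None:
        # Read mode: first value of the named parameter ("" if absent, an assumption).
        return next((v for k, v in pairs if k == param), "")
    # Write mode (assumed): replace the first occurrence, drop repeats, append if missing.
    updated, replaced = [], False
    for k, v in pairs:
        if k != param:
            updated.append((k, v))
        elif not replaced:
            updated.append((k, value))
            replaced = True
    if not replaced:
        updated.append((param, value))
    return urlunsplit(parts._replace(query=urlencode(updated)))
```

For `https://example.com/page?a=b&c=d#top`, `url_query(url, "a")` returns `"b"`, `url_query(url)` returns `"a=b&c=d"`, and `url_query(url, "a", "z")` returns `"https://example.com/page?a=z&c=d#top"`. Note that `urlencode` re-encodes the whole query, so unusual characters may come back escaped differently from the input.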

Returns the file name referenced in the url if applicable.

Returns the path referenced in the url, if applicable.

=UrlProperty(A1,"fragment") => "fragment"
Returns the fragment (location within a resource) of the url.
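The path, file name, extension and fragment properties map onto standard `urllib.parse` and `posixpath` calls. The sketch below is a hypothetical helper (`url_file_parts` is not part of UrlProperty); returning the extension without its leading dot is an assumption about the expected format.

```python
import posixpath
from urllib.parse import urlsplit


def url_file_parts(url: str) -> dict:
    """Hypothetical helper mirroring the path, file name, extension and fragment properties."""
    parts = urlsplit(url)
    path = parts.path
    # The file name is the last path segment; "" when the path ends with "/".
    file_name = posixpath.basename(path)
    # Extension without the leading dot; "" when the file name has none (an assumption).
    extension = posixpath.splitext(file_name)[1].lstrip(".")
    return {
        "path": path,
        "filename": file_name,
        "extension": extension,
        "fragment": parts.fragment,
    }
```

For `https://example.com/docs/report.pdf?x=1#page=2` this gives the path `/docs/report.pdf`, file name `report.pdf`, extension `pdf` and fragment `page=2`; a url whose path ends in `/` has no file name, hence the "if applicable".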

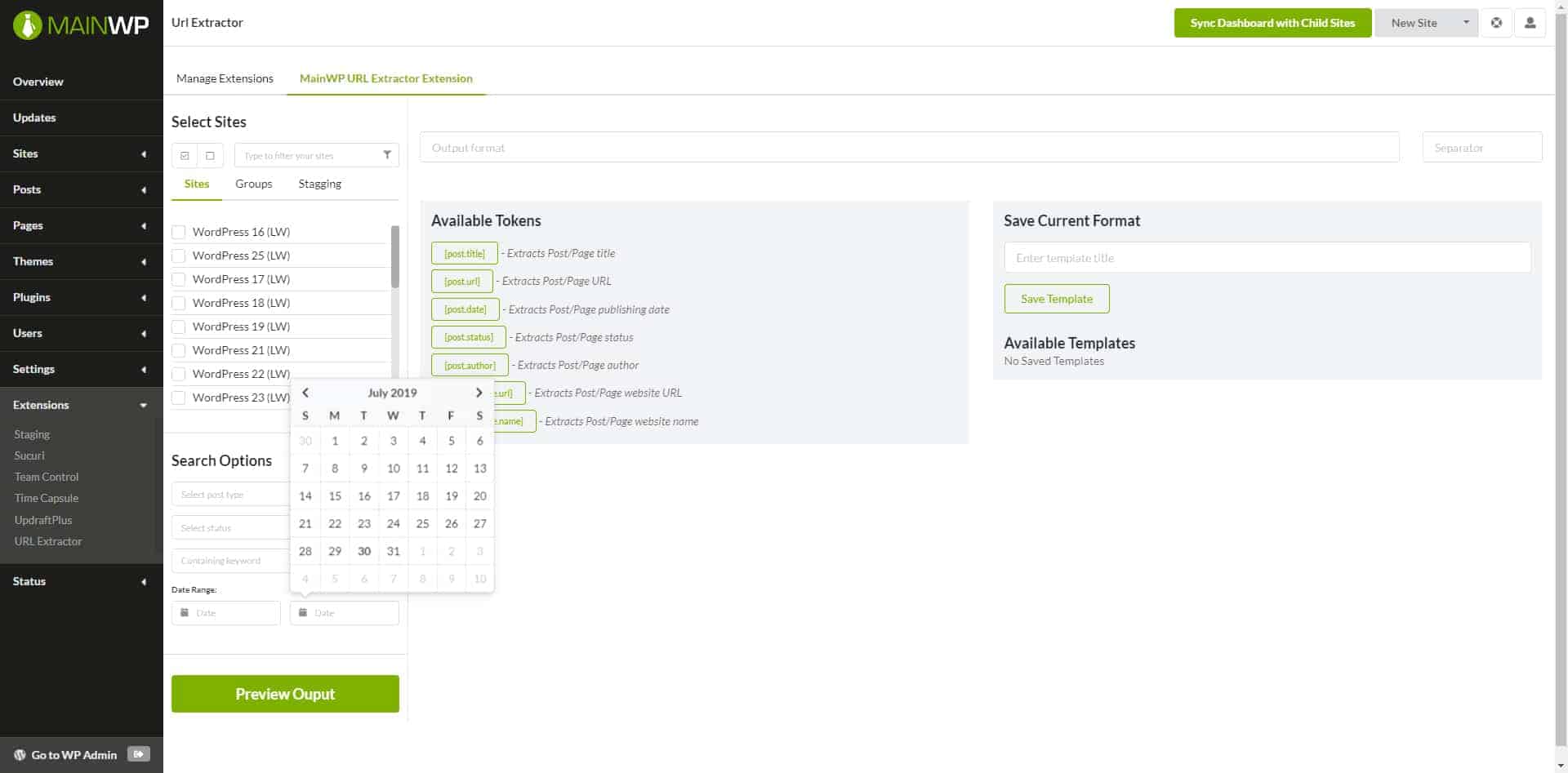

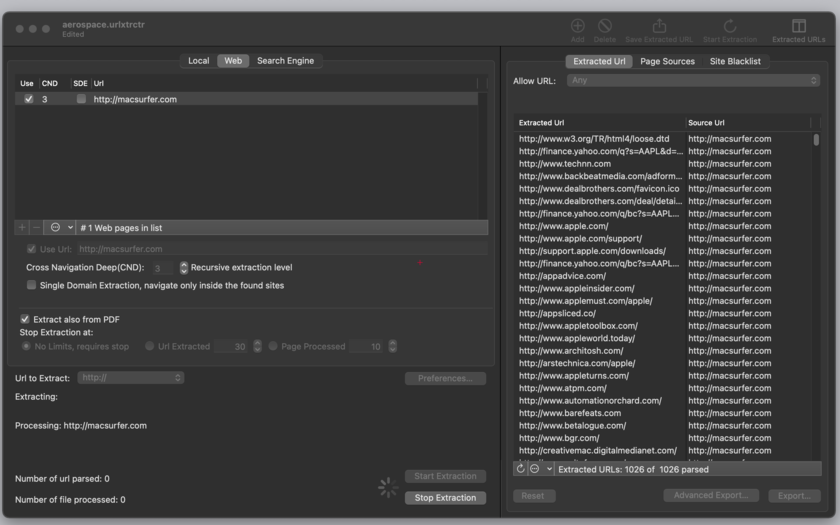

In the following examples, this url is used as reference.

Returns the second-level domain, if applicable, together with the top-level domain. UrlProperty is aware that some top-level domains have second-level domains (such as co.uk).

Returns the origin of the url (scheme + host + port).
=UrlProperty(A1,"origin") => ""

=UrlProperty(A1,"absolute")
Returns the host of the url.

The URL Extractor bot helps extract all the subdomains of a given domain or web portal URL. It expects a fully qualified URL or domain name, accepts a destination path from the user, and publishes the subdomains to that path as a CSV file.

Ignore URLs starting with an IP: IPv4 and IPv6 are supported and validated.
Add the word domain: in front of extracted domains or URLs (disavow file option).
Keep entries starting with domain: in the results (disavow file option).
Extract root domain from subdomain: validates against existing domain name suffixes and ignores the rest.

Running the tool locally

Extracting links from a page can be done with a number of open source command line tools. Lynx, a text-based browser, is perhaps the simplest: running lynx -listonly -dump with the page address as its argument prints only the list of links found on that page.
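The domain options described in this section (second-level plus top-level domain, origin, skipping IP hosts, the `domain:` disavow prefix) can be sketched with the standard library. This is a simplified illustration under stated assumptions: `MULTI_LABEL_SUFFIXES` is a tiny hard-coded stand-in for a real suffix list, so unlike the tool it does not validate against all existing suffixes, and every function name here is hypothetical.

```python
import ipaddress
from typing import Iterable, List
from urllib.parse import urlsplit

# Illustrative subset only: a real implementation would load the full
# Public Suffix List instead of hard-coding a few multi-label suffixes.
MULTI_LABEL_SUFFIXES = {"co.uk", "org.uk", "ac.uk", "com.au"}


def registrable_domain(host: str) -> str:
    """Second-level domain plus top-level domain, aware of suffixes like co.uk."""
    labels = host.lower().rstrip(".").split(".")
    if len(labels) >= 3 and ".".join(labels[-2:]) in MULTI_LABEL_SUFFIXES:
        return ".".join(labels[-3:])
    return ".".join(labels[-2:])


def origin(url: str) -> str:
    """Scheme + host + port; the port appears only if the url states one."""
    parts = urlsplit(url)
    host = parts.hostname or ""  # hostname is already lower-cased
    if ":" in host:  # IPv6 literals must be bracketed again
        host = f"[{host}]"
    port = f":{parts.port}" if parts.port is not None else ""
    return f"{parts.scheme}://{host}{port}"


def is_ip(host: str) -> bool:
    """True for IPv4 or IPv6 literals, validated by the ipaddress module."""
    try:
        ipaddress.ip_address(host)
        return True
    except ValueError:
        return False


def root_domains(urls: Iterable[str], disavow: bool = False) -> List[str]:
    """Unique root domains in input order, skipping IP hosts; optional 'domain:' prefix."""
    result: List[str] = []
    for url in urls:
        host = urlsplit(url).hostname
        if not host or is_ip(host):
            continue  # no host, or an IPv4/IPv6 literal
        entry = registrable_domain(host)
        if disavow:
            entry = "domain:" + entry
        if entry not in result:
            result.append(entry)
    return result
```

With this sketch, `registrable_domain("www.bbc.co.uk")` gives `bbc.co.uk`, while `blog.example.com` reduces to `example.com`.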

#HTTP URL EXTRACTOR MANUAL#

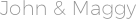

When is this tool useful? To gather all links from a large file without missing any, which can easily happen with a manual search.

Copy the text you want to process and paste it into the box, or upload the file. Fill in the settings and click the 'Extract' button. Next, copy the resulting text from the adjacent window.

This tool offers a fast and easy way to scrape links from a web page. Listing the links, domains, and resources that a page links to tells you a lot about the page. Reasons for using a tool such as this are wide-ranging, from Internet research and web page development to security assessments and web page testing.

The tool has been built with Lynx, a simple and well-known command line tool. Lynx is a text-based web browser popular on Linux-based operating systems. It was first developed around 1992 and supports old-school Internet protocols, including Gopher and WAIS, along with the more commonly known HTTP, HTTPS, FTP, and NNTP. Because it is a text-based browser, you will not be able to view graphics; however, it is a handy tool for reading text-based pages. Lynx can also be used for troubleshooting and testing web pages from the command line.

API for the Extract Links Tool

Another option for accessing the extract links tool is to use the API. Rather than using the above form, you can make a direct link to the following resource with the parameter ?q set to the address you wish to extract links from. The API is simple to use and aims to be a quick reference tool. Like all our IP Tools, it is limited to 100 queries per day, or you can increase the daily quota with a Membership.

URL Extractor can work attended or in batch mode, extracting from the web for hours in a completely autonomous mode. During extraction, the user can watch the table fill with URLs as they are extracted. Filters can be used to decide what to accept or exclude, and the extracted URLs are then ready to be saved to disk for later use.
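Scraping the links and resources from a page, with a simple exclude filter, can be sketched using Python's built-in `html.parser`. This is an illustrative stand-in, not how the Lynx-based tool or URL Extractor work internally; `LinkCollector`, `extract_links` and the substring-based `exclude` filter are hypothetical names and choices.

```python
from html.parser import HTMLParser
from typing import Iterable, List, Optional, Tuple
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects href/src targets from an HTML page, resolved against a base URL."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: List[str] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        for name, value in attrs:
            if name in ("href", "src") and value:
                # Resolve relative links such as "/about" against the page address.
                absolute = urljoin(self.base_url, value.strip())
                if absolute not in self.links:  # keep the first occurrence only
                    self.links.append(absolute)


def extract_links(html: str, base_url: str, exclude: Iterable[str] = ()) -> List[str]:
    """Return unique links in page order, dropping any containing an excluded substring."""
    parser = LinkCollector(base_url)
    parser.feed(html)
    parser.close()
    blocked = tuple(exclude)
    return [link for link in parser.links if not any(s in link for s in blocked)]
```

To run it against a live page, the HTML can be fetched first, for example with `urllib.request.urlopen(url).read().decode("utf-8", errors="replace")`. Resolving relative links against the page address is what turns `/about` into a usable absolute URL in the output list.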
def extracturls(self, isbinary: bool = False)
Extract urls, including http, file, ssh and ftp urls.
Args: isbinary (bool, optional): whether the state is in binary.
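The `extracturls` signature above gives no body, so the following is a minimal sketch of one way it could work, assuming a regular expression over the listed schemes. The owning class `UrlSource`, its `state` attribute, the UTF-8 decoding when `isbinary` is true, and the trailing-punctuation trimming are all assumptions for illustration.

```python
import re
from typing import List, Union

# Assumed pattern: one of the listed schemes, "://", then a run of characters
# that are not whitespace, quotes or angle brackets.
URL_PATTERN = re.compile(r"\b(?:https?|ftp|ssh|file)://[^\s<>\"']+", re.IGNORECASE)

# Trailing punctuation that usually ends a sentence rather than a URL.
# Note: this also strips a legitimate closing ")" at the end of a URL.
TRAILING = ".,;:!?)]"


class UrlSource:
    """Hypothetical owner of extracturls; `state` holds the text or raw bytes."""

    def __init__(self, state: Union[str, bytes]) -> None:
        self.state = state

    def extracturls(self, isbinary: bool = False) -> List[str]:
        """Extract urls including http, file, ssh and ftp.

        Args:
            isbinary (bool, optional): Whether the state is binary (bytes)
                and must be decoded as UTF-8 first.
        """
        state = self.state
        if isbinary:
            state = state.decode("utf-8", errors="replace")
        found: List[str] = []
        for match in URL_PATTERN.finditer(state):
            url = match.group(0).rstrip(TRAILING)
            if url not in found:  # de-duplicate while keeping order
                found.append(url)
        return found
```

For the text `See https://example.com/a, ftp://files.example.org/pub and file:///etc/hosts.` the sketch returns the three urls without the trailing comma and full stop. A regex is quick but approximate; parenthesised or quoted urls may need extra rules.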